They might have been called dens of anti-nationals and good-for-nothings. But on Monday, the government added another label to Jawaharlal Nehru University and Hyderabad Central University: among the best educational institutions in the country.

In the government's first-ever nationwide ranking of educational institutions and universities, JNU and HCU came in third and fourth, behind the Indian Institute of Science, Bangalore, and the Institute of Chemical Technology, which took the top slots. The results were based on a nationwide survey of all centrally funded institutions and other universities, commissioned by the Human Resource Development ministry to assess their quality of education and graduation outcomes.

Human Resource Development Minister Smriti Irani hailed the framework as a "revolutionary" step when she launched it in September last year.

While the top 10 list also contains familiar names such as Delhi University and Jamia Millia Islamia, the interesting bit is how exactly these rankings work. The ministry's aim was to provide an India-centric approach to evaluating the country's institutions, since most of them fail to make it into the top 100 of popular international rankings, something even the President has expressed concern about.

For far too long, academics have lamented the inherent bias in international rankings, arguing that some of their indicators often don't reflect the academic prowess of Indian institutions. The new rankings attempt to correct this bias by focusing more on the quality of education at the institutions, judged by the experience, qualifications and industry exposure of the faculty.

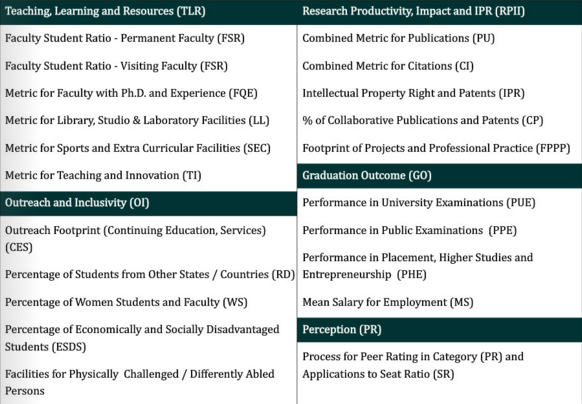

The new rankings are broadly based on five main pillars, divided into a number of sub-heads.

The individual scores under all these heads were totalled to rank universities on their overall performance. Some indicators, such as perception, were based on online as well as focus group surveys.

Hyderabad University outperformed its peers on indicators such as perception, where it scored 98 out of 100, and outreach, where it scored 88 out of 100. JNU, meanwhile, scored a perfect 100 on graduation outcomes, which measure the impact of graduation and student placements, and 98 out of 100 on perception, the same as Hyderabad University.

Usual suspects

The new rankings aim to offer a more transparent system of grading universities than newspaper and magazine rankings, which are often the sole source of information for students and are riddled with conflicts of interest.

That said, the new ranking hardly does away with problems of sourcing and validating data. The new system relied on voluntary participation, and the data was supplied directly by university administrations. The report acknowledges this on its very first page.

“Some of the institutions were definitely casual in supplying the data sought,” it said. “Reliability of an exercise like this depends entirely on the reliability of data. This is clearly an area in which further work is needed.”

That's not all. The report also flagged the problem of relying on university-supplied data that cannot easily be verified. Going forward, the report said, the ministry might rely on independent data sources and drop parameters for which the sources "cannot be reliably identified".

The data-collection problem is also evident in the university-specific details, where many fields are either left blank or filled with a placeholder zero, indicating a lack of verifiable data. For instance, Hyderabad University's entry is missing the teaching experience of its faculty, its annual expenditure on co-curricular activities and its number of visiting faculty.

However, the problem is not just incomplete data. Several critics have pointed out that instead of trying to improve public perception of universities through rankings, the government should focus on improving the quality of education, an area that previous governments can also be accused of neglecting.

The last word on the efficacy of these rankings, and on how useful they actually prove to be, is still awaited. But the fact that the HRD ministry had to hold back the rankings for Category B institutions because of "inconsistencies" in data across the board means there is a lot of catching up to do: first in ensuring quality education, and then in assessing it.

[Source: Scroll]
